Google has fixed a typo in its crawler documentation that had inadvertently misidentified one of its crawlers. It may seem like a minor issue, but it matters for SEOs and publishers who rely on the documented user agent strings to set firewall rules. Matching against the wrong string can cause a website to inadvertently block a legitimate Google crawler.

Google inspection tool

The typo is in the section of the documentation that covers the Google inspection tool crawler. This is an important crawler that Google sends to a website in response to one of two prompts: a URL inspection in Search Console or a rich results test.

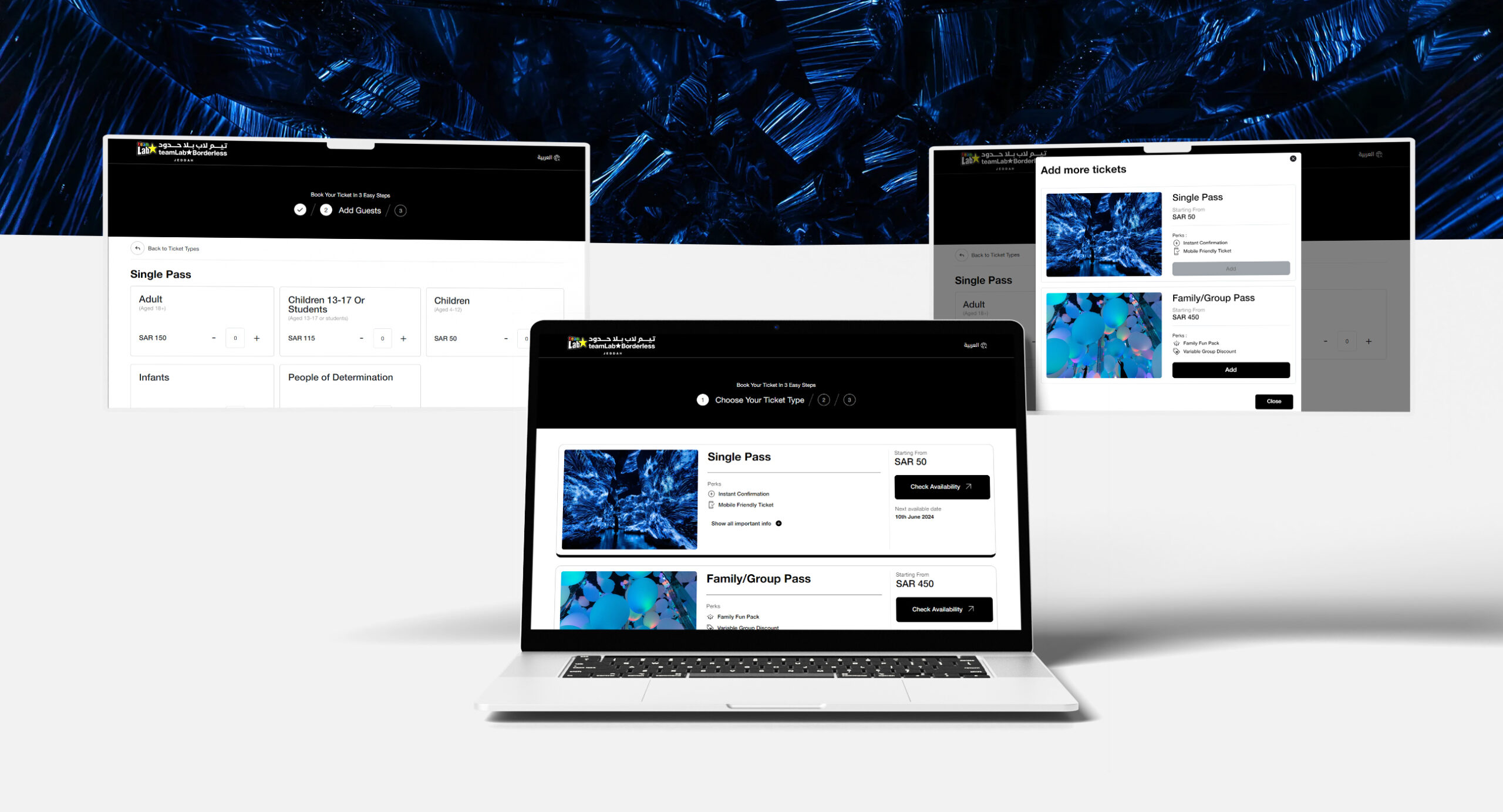

URL inspection functionality in Search Console

When a user checks in Search Console whether a webpage is indexed, or requests indexing, Google responds by sending this crawler to the page. The URL inspection tool provides the following functionality:

- See the status of a URL in the Google index

- Inspect a live URL

- View a rendered version of the page

- View JavaScript output, loaded resources and other information

- Request indexing of a URL

- Troubleshoot a missing page

- Learn about your canonical page

Rich results test

This test checks the validity of a page's structured data and whether it qualifies for an enhanced search result, known as a rich result. Running the test triggers a specific Google crawler to fetch the webpage and analyze the structured data.

Why is a crawler user agent typo a problem?

It can be troublesome for websites that sit behind a paywall but whitelist specific robots, such as Google's crawlers.

Incorrect user agent identification can also be a problem if the CMS needs to block the crawler with robots.txt or a robots meta directive, which is how a site prevents Google from discovering pages it should not be looking at.

Some forum content management systems, for example, remove links to parts of the site like the user registration page, user profiles and the search function to prevent bots from indexing those pages.
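As a rough illustration of why the exact user agent token matters for robots.txt blocking, here is a minimal sketch using Python's standard `urllib.robotparser`. The paths (`/register/`, `/profiles/`, `/search/`), the `example.com` URLs, the helper names and the misspelt token are all hypothetical, and the token spelling `Google-InspectionTool` is an assumption about how the crawler identifies itself.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules for a forum CMS (paths are illustrative only).
CORRECT_RULES = """\
User-agent: Google-InspectionTool
Disallow: /register/
Disallow: /profiles/
Disallow: /search/
"""

# The same rules with a misspelt user agent token (hypothetical mistake).
MISSPELT_RULES = CORRECT_RULES.replace(
    "Google-InspectionTool", "Google-Inspection-Tool"
)


def is_blocked(robots_txt: str, token: str, url: str) -> bool:
    """Return True if the rules forbid the given crawler token from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(token, url)


if __name__ == "__main__":
    url = "https://example.com/register/"
    print(is_blocked(CORRECT_RULES, "Google-InspectionTool", url))
    print(is_blocked(MISSPELT_RULES, "Google-InspectionTool", url))
```

With the correctly spelt token the registration page is blocked for that crawler. With the misspelt token the rule group no longer applies to it, so the page is left open.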

A hard-to-spot user agent typo

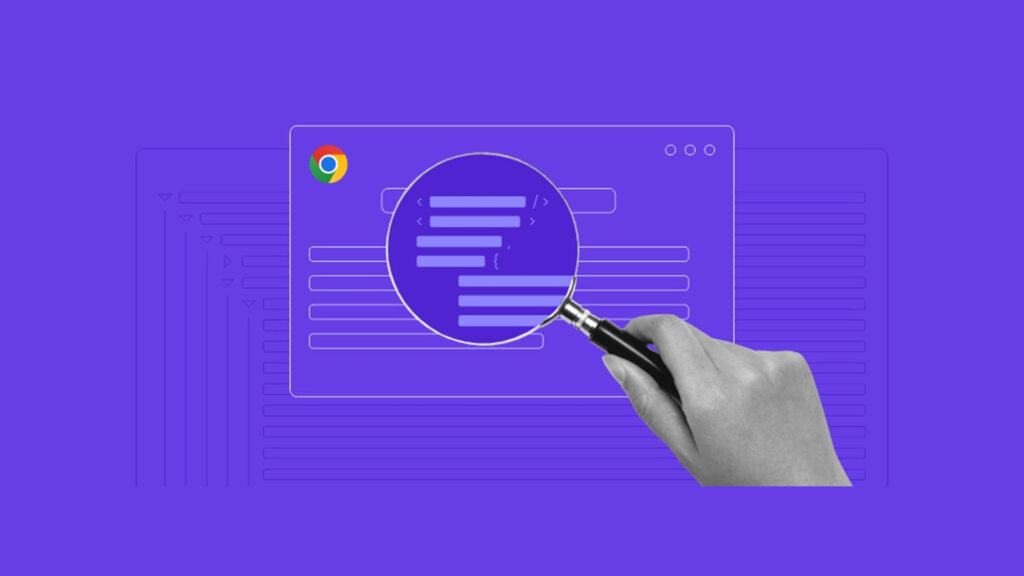

The issue was a hard-to-catch typo in the user agent description. The difference is as follows:

Original version:

Mozilla/5.0 (compatible; Google-InspectionTool/1.0)

New version:

Mozilla/5.0 (compatible; Google-InspectionTool/1.0;)
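To show how a one-character change like this can break a whitelist, here is a minimal Python sketch. It assumes a hypothetical allowlist that compares full user agent strings exactly, and contrasts it with matching on the crawler token alone. The function names and the allowlist are illustrative, not part of any Google or CMS API, and the token is written as `Google-InspectionTool`, which is an assumption about its exact spelling.

```python
OLD_UA = "Mozilla/5.0 (compatible; Google-InspectionTool/1.0)"
NEW_UA = "Mozilla/5.0 (compatible; Google-InspectionTool/1.0;)"

# Hypothetical allowlist built from the earlier version of the documentation.
EXACT_ALLOWLIST = {OLD_UA}

# Hypothetical token used for looser matching.
CRAWLER_TOKEN = "Google-InspectionTool"


def allowed_exact(user_agent: str) -> bool:
    """Allow only user agents that equal an allowlisted string exactly."""
    return user_agent in EXACT_ALLOWLIST


def allowed_by_token(user_agent: str) -> bool:
    """Allow any user agent that contains the crawler token (case-insensitive)."""
    return CRAWLER_TOKEN.lower() in user_agent.lower()


def first_difference(a: str, b: str) -> int:
    """Return the index of the first differing character, or -1 if equal."""
    for i, (x, y) in enumerate(zip(a, b)):
        if x != y:
            return i
    return -1 if len(a) == len(b) else min(len(a), len(b))


if __name__ == "__main__":
    i = first_difference(OLD_UA, NEW_UA)
    print(f"First difference at index {i}: {OLD_UA[i]!r} vs {NEW_UA[i]!r}")
    print("Exact match allows new string:", allowed_exact(NEW_UA))
    print("Token match allows new string:", allowed_by_token(NEW_UA))
```

The exact-match check rejects the updated string because of the added semicolon, while the token check accepts both versions. A user agent string can be spoofed, so token matching on its own only identifies what a request claims to be.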

Be sure to update the relevant robots.txt, CMS code or robots meta directives if you or your client are whitelisting Google crawlers or blocking crawlers from certain pages. It is worth comparing the original version with the updated version: the change is small, but it can make a major difference.

For more blogs like this, connect with GTECH.
